This article also appears as a video on the Modern Software Engineering Channel.

Developers have been using the Test Pyramid forever to decide what kinds of tests to invest in. This model has also been much criticised as not giving terribly helpful guidance. In this article I’m going to look at what developers actually use tests for, and walk through a better model to inform your test strategy: the freshly updated Test Desiderata 2.0.

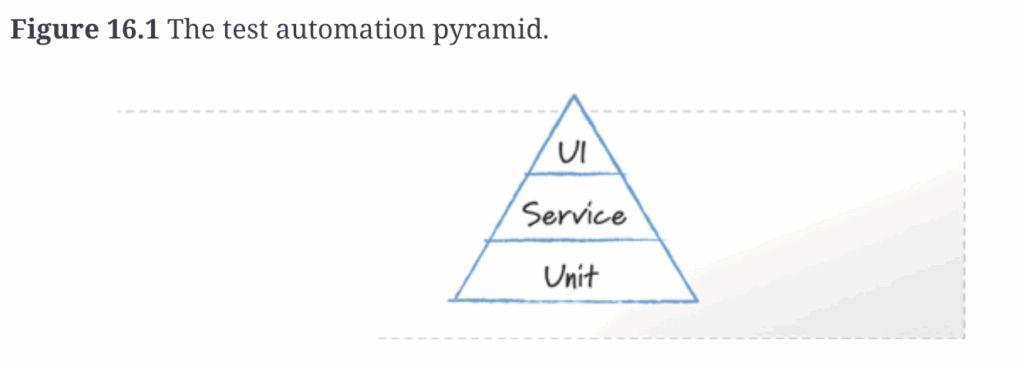

The test pyramid was originally introduced by Mike Cohn, in his 2009 book “Succeeding with Agile”, and it looked like this:

He explains his reasoning. Unit tests are great because they help programmers diagnose problems and they are easy to write. UI tests, though, are brittle, expensive and slow. Service tests are good because they are written from a customer perspective, so they find important issues, yet they bypass the awkward and slow user interface.

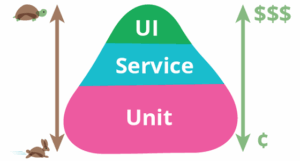

This ‘test pyramid’ model became pretty popular in agile circles, and Martin Fowler later re-drew it with the tradeoffs explicitly shown:

image from Martin Fowler’s bliki

Unit tests are fast and cheap, UI tests are slow and expensive, and service tests sit in between.

I think this is a huge improvement, because it changes the conversation from “Is this a unit test or a service test?” to “Is this test fast and cheap enough?” The kind of test is much less interesting than the desirable properties it gives my test suite as a whole.

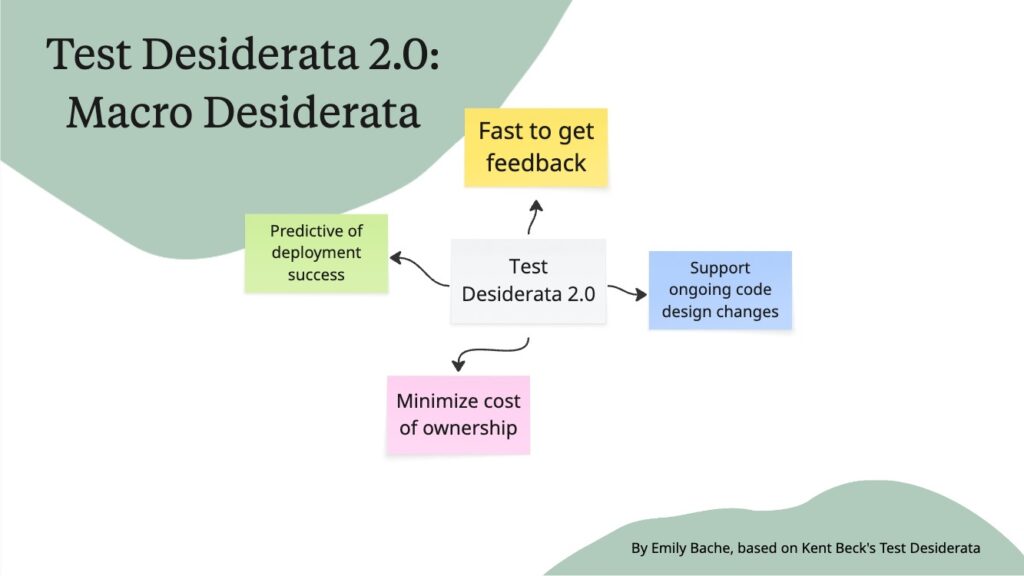

If you compare this with my Test Desiderata 2.0, you’ll see that Fast and Cheap are two of the macro properties of a test suite. Desiderata means “desirable properties”, and I have another article about how I came up with the model. It doesn’t mention kinds of test like unit, service or UI, because that is not an interesting dimension for me. I’ll come back to the other two properties I value, but first let me explain my reasoning.

The test pyramid assumes that granularity, meaning how much of the application a test exercises, is directly correlated with the speed and cost of the test. That doesn’t have to be true. I’ve worked with systems where the “unit” tests were hopelessly slow, and other systems where the end-to-end tests through the UI were fast enough to support refactoring. Speed and cost actually depend more on the design and architecture of the system than on the label we give each kind of test. These labels are also really confusing for people.
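To make the point about design concrete, here is a minimal Python sketch. Every name in it is hypothetical, and it assumes one common design technique (a ports-and-adapters style, where the application core reaches storage only through an interface); it is an illustration, not the author’s prescription. Because storage sits behind that interface, a test covering a whole use case, from the service entry point down to storage, can swap in an in-memory adapter and run without any I/O.

```python
from dataclasses import dataclass, field
from typing import Protocol


class OrderRepository(Protocol):
    """Port: the only storage interface the application core depends on."""

    def save(self, order_id: str, total_cents: int) -> None: ...

    def total_for(self, order_id: str) -> int: ...


@dataclass
class InMemoryOrderRepository:
    """Hypothetical test adapter: keeps orders in a dict, so no database is needed.

    A production system would supply a database-backed adapter with the
    same two methods.
    """

    totals: dict[str, int] = field(default_factory=dict)

    def save(self, order_id: str, total_cents: int) -> None:
        self.totals[order_id] = total_cents

    def total_for(self, order_id: str) -> int:
        return self.totals[order_id]


class CheckoutService:
    """Hypothetical application core: business rules only, storage injected via the port."""

    def __init__(self, repo: OrderRepository) -> None:
        self._repo = repo

    def checkout(self, order_id: str, item_prices_cents: list[int]) -> int:
        total = sum(item_prices_cents)
        self._repo.save(order_id, total)
        return total


def test_checkout_stores_the_order_total():
    # Exercises the whole flow, from the service entry point down to storage,
    # yet performs no I/O, so it runs about as fast as a typical "unit" test.
    repo = InMemoryOrderRepository()
    service = CheckoutService(repo)
    assert service.checkout("order-1", [1200, 350]) == 1550
    assert repo.total_for("order-1") == 1550
```

If `CheckoutService` instead opened its own database connection, the same test would need a running database and would become slow and expensive, even though its granularity had not changed at all.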

Confusing labels – unit, service, UI

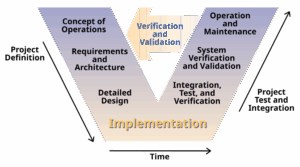

What even is a ‘unit’ test? Before JUnit came out, unit tests were generally manual and performed by a different group from the programmers who wrote the code for that unit. It was all part of a waterfall process, often using the “V” model for testing, a very different concept from the lightweight, programmer-written tests that Kent Beck and other XP enthusiasts were proposing.

The ‘V’ model basically says that during the analysis and design phases of the waterfall you outline the kinds of tests that will be needed at each level, starting with the system level and working down to the unit level. Then, in the construction and verification phases, you go back up the other side of the V: first coding the units and running the unit tests, then the integration tests, and finally the system tests at the end of the project. This model is basically the opposite of an agile or iterative approach to software development.

As ever, when people are presented with a new concept they have no experience of, like JUnit, they are likely to reinterpret it as something they already know and are already doing. It’s even worse if you use a term like ‘unit test’ that already meant something different.

And don’t get me started on what a ‘service’ test is. Most people just assume it’s a kind of integration test, since that’s what sits between unit tests and system tests in the V model. The middle layer of the test pyramid has come to mean anything from a millisecond-fast JUnit test that happens to exercise more than one class, up to a test of a whole microservice including its database, Kafka queues and HTML.

That’s not what Cohn originally described. The key feature of his ‘service’ tests is that they are written from the customer perspective. This idea is central to Behaviour Driven Development, which emphasizes the role of the test as a specification of intent. It’s not at all equivalent to an integration test. I’ll come back to that shortly.

Key takeaway of the test pyramid

These criticisms of the test pyramid are not new. I’m not the first to point out that the big takeaway from the test pyramid is that most of your tests should be fast and cheap, and you should add only the slow and expensive tests you really need, because you can’t afford too many of them. Most of the time, tests through the UI are slow, flaky and annoying, but we put up with them because they find interesting bugs.

Which brings me to my Test Desiderata 2.0 model. As well as being fast and cheap, a third macro property you want your test suite to have is being predictive of deployment success. You want the tests to find interesting bugs before you go to production. Some bugs don’t show up unless you have the full GUI up and operational. That’s not necessarily the same as having the whole system up and running, though. The test pyramid conflates tests through the GUI with end-to-end tests. In my model I instead put the focus on what kinds of risks each kind of test addresses, and whether there is a cheaper and faster way to cover them. The trade-off is explicit: you balance three properties (speed, cost and predictiveness) against each other.

Support ongoing code changes

The fourth macro property of a test suite in my model is that it supports ongoing code design changes. I almost didn’t include it, because many developers completely ignore this benefit of a test suite: getting it changes the way you work with the code.

In software we are continually changing the design as we add features, improve performance and refactor for maintainability. When you’re doing TDD or BDD, the tests become a first-order driver of the design, influencing the way you decompose the problem and do software engineering.

Writing the tests first means you document your intent for what a piece of code is supposed to do before the implementation exists. You also get positive design pressure towards more usable and testable APIs.

If you write the tests afterwards, you are more likely to end up with larger-granularity tests that lean heavily on mocks to compensate for poor separation of concerns in the design. These tests are more brittle in the face of refactoring, and therefore more expensive to maintain.

Support for fast, cheap, predictive and more

Using the tests to inform the design before the code exists will help you get a test suite that achieves the other three macro desiderata (cheap, fast and predictive), but the benefit goes further than that. Tests written with intent also act as documentation.

When you come to a bit of code you didn’t write, you can read the implementation, and probably work out what it does, but not necessarily why it is that way. Tests written with intent will explain the perspective of the customer or user of that code.
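As an illustration, here is a minimal Python sketch of tests written with intent. The shipping rule, its threshold and every name in it are hypothetical, invented for this example. The test names state the rule from the customer’s point of view, so a reader learns why the boundary at 5000 cents matters, not just that the code contains an `if` statement.

```python
import unittest

# Hypothetical business rule: orders of $50.00 or more ship free.
FREE_SHIPPING_THRESHOLD_CENTS = 5000
STANDARD_SHIPPING_CENTS = 495


def shipping_cost_cents(basket_total_cents: int) -> int:
    """Return the shipping charge for a basket, in cents."""
    if basket_total_cents >= FREE_SHIPPING_THRESHOLD_CENTS:
        return 0
    return STANDARD_SHIPPING_CENTS


class ShippingCostTest(unittest.TestCase):
    # The names describe the rule as the customer experiences it,
    # rather than restating the branches of the implementation.

    def test_customer_spending_at_least_50_dollars_gets_free_shipping(self):
        self.assertEqual(shipping_cost_cents(5000), 0)

    def test_customer_just_below_the_threshold_pays_standard_shipping(self):
        self.assertEqual(shipping_cost_cents(4999), 495)
```

A generated test named something like `test_returns_0_when_total_ge_5000` would pin down exactly the same behaviour, but it restates the implementation rather than the reason the rule exists. The tests above can be run with a standard runner such as `python -m unittest`.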

AI tools are generally bad at documenting intent

Unfortunately, AI tools are generally bad at this. They can examine some code and generate tests that are sensitive to its behaviour, fulfilling the ‘predictive’ property. They can use mocks to make the tests fast to execute. The tests are cheap to maintain if the tool can update them. The fourth macro property is really where these tests fall down. Why do all those cases matter? What is it all for? You can’t see that in the implementation alone; you need to understand the customer or user perspective.

You can include the customer perspective in your prompt, and that will probably help. At some point, though, you hit a brick wall: the vast majority of tests in the training data are not written like that, and it’s hard to get AI tools to do something that lies largely outside their training data. It’s still worth trying.

Conclusions

The Test Pyramid was much improved by Martin Fowler when he added the two axes of speed and cost, which emphasize why you want more unit tests than the other kinds: they are fast and cheap. My Test Desiderata 2.0 adds two more dimensions you care about, which were perhaps implicit in the test pyramid but mostly misunderstood. Your whole suite needs to be predictive of success in production, and it needs to support ongoing code design changes.

All four of these goals are easier to achieve in a TDD or BDD process where you write good tests with intent. Writing tests from a user or customer perspective was part of Mike Cohn’s description of the original test pyramid, but it got lost in debates about “how big is a unit?” and “what even is an integration test?”. With the Test Desiderata 2.0 model, I’d like to shift the emphasis back from test type definitions to benefits.

It is a better starting point for evaluating your existing test suite and informing your test strategy.

Happy Coding!