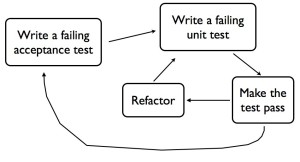

This is the third post in a series about London School TDD. The first post introduced the topic, and the second discussed “Outside-In Development with Double-Loop TDD”. In this post I’d like to talk about the second difference I see between Classic and London School TDD, which concerns your style of Object Oriented Design.

“Different design styles have different techniques that are most applicable for test-driving code written in those styles, and there are different tools that help you with those techniques…

That’s what we … designed JMock to do …

“Tell, Don’t Ask” object-oriented design.”

— Nat Pryce, in an email to a discussion forum.

That quote explains the objective Nat et al. had when designing JMock, and I think it shows that London School TDD is as much a school of design as a testing technique. Let’s take a closer look at this way of designing objects.

Tell, Don’t Ask

“Tell, Don’t Ask” Object Oriented Design is about having Cohesive objects that hide their internal workings. If your objects obey the Law of Demeter, that’s a good start: it means they hide their inner workings and don’t talk to objects far away on the object graph. That reduces Coupling in your system, which should make it easier to maintain.
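To make the difference concrete, here is a minimal Python sketch of the same operation written in an "ask" style and a "tell" style. The `Wallet` and `Customer` classes and the two `collect_*` functions are hypothetical names invented for this illustration; they don't come from GOOS or JMock.

```python
class Wallet:
    """Holds a balance; a hypothetical detail the customer would rather hide."""

    def __init__(self, balance: int) -> None:
        self.balance = balance

    def debit(self, amount: int) -> None:
        if amount > self.balance:
            raise ValueError("insufficient funds")
        self.balance -= amount


class Customer:
    def __init__(self, wallet: Wallet) -> None:
        # Left public only so the "ask" style below has something to reach into.
        self.wallet = wallet

    def pay(self, amount: int) -> None:
        # "Tell" entry point: how the payment is funded stays inside Customer.
        self.wallet.debit(amount)


def collect_by_asking(customer: Customer, amount: int) -> None:
    # "Ask" style: the caller reaches through customer to wallet to balance
    # and makes the decision itself, which breaks the Law of Demeter.
    if customer.wallet.balance >= amount:
        customer.wallet.balance -= amount
    else:
        raise ValueError("insufficient funds")


def collect_by_telling(customer: Customer, amount: int) -> None:
    # "Tell" style: one message to the immediate collaborator, nothing more.
    customer.pay(amount)
```

Both functions have the same effect, but only `collect_by_asking` has to change if `Customer` later stops using a `Wallet`.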

In their book “Growing Object-Oriented Software, Guided by Tests” (GOOS), Freeman & Pryce actually define “Tell, Don’t Ask” as the same as following the Law of Demeter (p17). They then spend several chapters on their design style, expanding far beyond simply “following the Law of Demeter”. It’s well worth a read. Here’s a sample:

“… we focus our design effort on how the objects collaborate … obviously, we want to achieve a well-designed class structure, but we think the communication patterns between objects are more important.”

— Freeman & Pryce, GOOS, p58

Message passing vs Types with Data

So it’s basically about how you view your objects. Do you see them primarily in terms of the messages they send to and receive from other objects in order to get stuff done? Or are you more focussed on the data your objects look after and the class of objects they are part of?

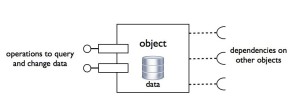

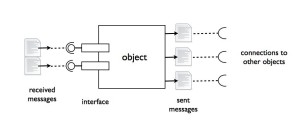

In the diagram below you can see an object is defined in terms of which messages it sends and receives:

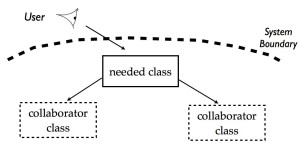

This diagram shows the same object, but with a focus on data rather than messages:

If you have a “message” focus, you’ll be concerned with defining protocols and interfaces. You’ll worry about which collaborators will be needed to process a particular message. If you have a “data” focus, you’ll be interested in checking your object goes through particular state transitions. You’ll check it makes correct calculations based on its data, and hides whether the result is cached or calculated.

In my first post I talked about the three ways to verify object behaviour. In Classic TDD, the most popular way to write your assert is to check the state of the object you’re testing, or a collaborator, using a public API. This naturally leads you to design objects that are more type-oriented, with the emphasis on class relationships.
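As a rough illustration of the state-based style, here is a small sketch using Python's standard `unittest` module. The `Basket` class is a hypothetical example invented for this post, not code from any of the books mentioned.

```python
import unittest


class Basket:
    """Hypothetical object under test; its list of prices stays private."""

    def __init__(self) -> None:
        self._prices = []

    def add(self, price: int) -> None:
        self._prices.append(price)

    def total(self) -> int:
        # Public query method: the only thing the test relies on.
        return sum(self._prices)


class BasketStateTest(unittest.TestCase):
    def test_total_reflects_added_items(self) -> None:
        basket = Basket()
        basket.add(3)
        basket.add(4)
        # Classic-style assert: check state through the public API.
        self.assertEqual(basket.total(), 7)
```

The test never needs to know how `Basket` stores its prices, only that `total()` returns the right answer.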

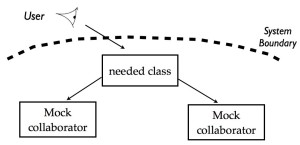

In London School TDD, the favoured way to write your assert is to use a mock and check a particular interaction happened, or in other words, a particular message was passed. This is because you favour a design where objects don’t reveal much at all about their data – your system is all about the interactions.
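Here is a sketch of what such an interaction-based test might look like. It uses Python's standard `unittest.mock` rather than JMock, and the `OrderProcessor` class and its `order_completed` message are hypothetical names made up for the example.

```python
import unittest
from unittest.mock import Mock


class OrderProcessor:
    """Hypothetical object under test; it exposes no state to query."""

    def __init__(self, notifier) -> None:
        self._notifier = notifier

    def complete(self, order_id: str) -> None:
        # The observable behaviour is the message sent to the collaborator.
        self._notifier.order_completed(order_id)


class OrderProcessorInteractionTest(unittest.TestCase):
    def test_tells_notifier_when_order_completes(self) -> None:
        # spec restricts the mock to the one message in the assumed protocol.
        notifier = Mock(spec=["order_completed"])

        OrderProcessor(notifier).complete("A-42")

        # London-style assert: verify that a particular message was passed.
        notifier.order_completed.assert_called_once_with("A-42")
```

Notice there is nothing on `OrderProcessor` for a state-based assert to inspect, so the mock is the natural place to check its behaviour.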

Dependencies and Collaborators

In a message-oriented design, it’s natural to want to specify which collaborators a particular object needs in order to get something done, and what messages it will send them. Those collaborations are part of the public specification of an object, and natural to pin down in a test case using mocks. If you instead check your object via a method that lets you query its state, it could expose details that might stop you refactoring the internals later. This leads you to prefer to check your messages, rather than state and data.

If you have a more type-oriented design, you may want to hide the fact you’re storing data across several objects, or delegating certain calculations to other objects. Those dependencies aren’t part of the public specification; what matters is the end result. If you start exposing these interactions in your test via mocks, you’ll end up with brittle tests that hinder a subsequent redistribution of responsibilities between an object and its dependencies. This leads you to prefer to check state and data, rather than interactions.
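The brittleness can be sketched in a few lines of Python. Everything here is hypothetical and deliberately contrived: two versions of an invoice class that give the same result, one delegating to a tax policy and one doing the sum itself, plus a state check and an interaction check applied to each. The 20% rate is an assumption chosen to keep the arithmetic simple.

```python
from unittest.mock import Mock


class DelegatingInvoice:
    """Before refactoring: asks a collaborator for the tax."""

    def __init__(self, net: int, tax_policy) -> None:
        self._net = net
        self._tax_policy = tax_policy

    def total(self) -> int:
        return self._net + self._tax_policy.tax_on(self._net)


class InliningInvoice:
    """After refactoring: same result, but the tax is computed internally."""

    def __init__(self, net: int, tax_policy) -> None:
        self._net = net  # tax_policy is accepted but no longer used

    def total(self) -> int:
        return self._net + self._net * 20 // 100  # assumed 20% rate


def state_check(invoice_cls) -> bool:
    policy = Mock()
    policy.tax_on.return_value = 20
    # Only the end result matters here.
    return invoice_cls(100, policy).total() == 120


def interaction_check(invoice_cls) -> bool:
    policy = Mock()
    policy.tax_on.return_value = 20
    invoice_cls(100, policy).total()
    # This pins down the delegation itself, not just the result.
    return policy.tax_on.called
```

`state_check` holds for both classes, while `interaction_check` holds only for `DelegatingInvoice`, so the mock-based check would have to be rewritten after the refactoring even though the behaviour a caller sees is unchanged.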

Comparing the two styles

In these articles I’ve tried to draw each style of TDD to an extreme in order to emphasize the differences. Of course, in practice, a competent developer will use the style most appropriate to the situation she finds herself in. She may use both styles while developing different pieces of the same system. In my next post, I’d like to illustrate this with a small example.